AMD said on Tuesday its most-advanced GPU for artificial intelligence, the MI300X, will start shipping to some customers later this year.

AMD’s announcement represents the strongest challenge to Nvidia, which currently dominates the market for AI chips with over 80% market share, according to analysts.

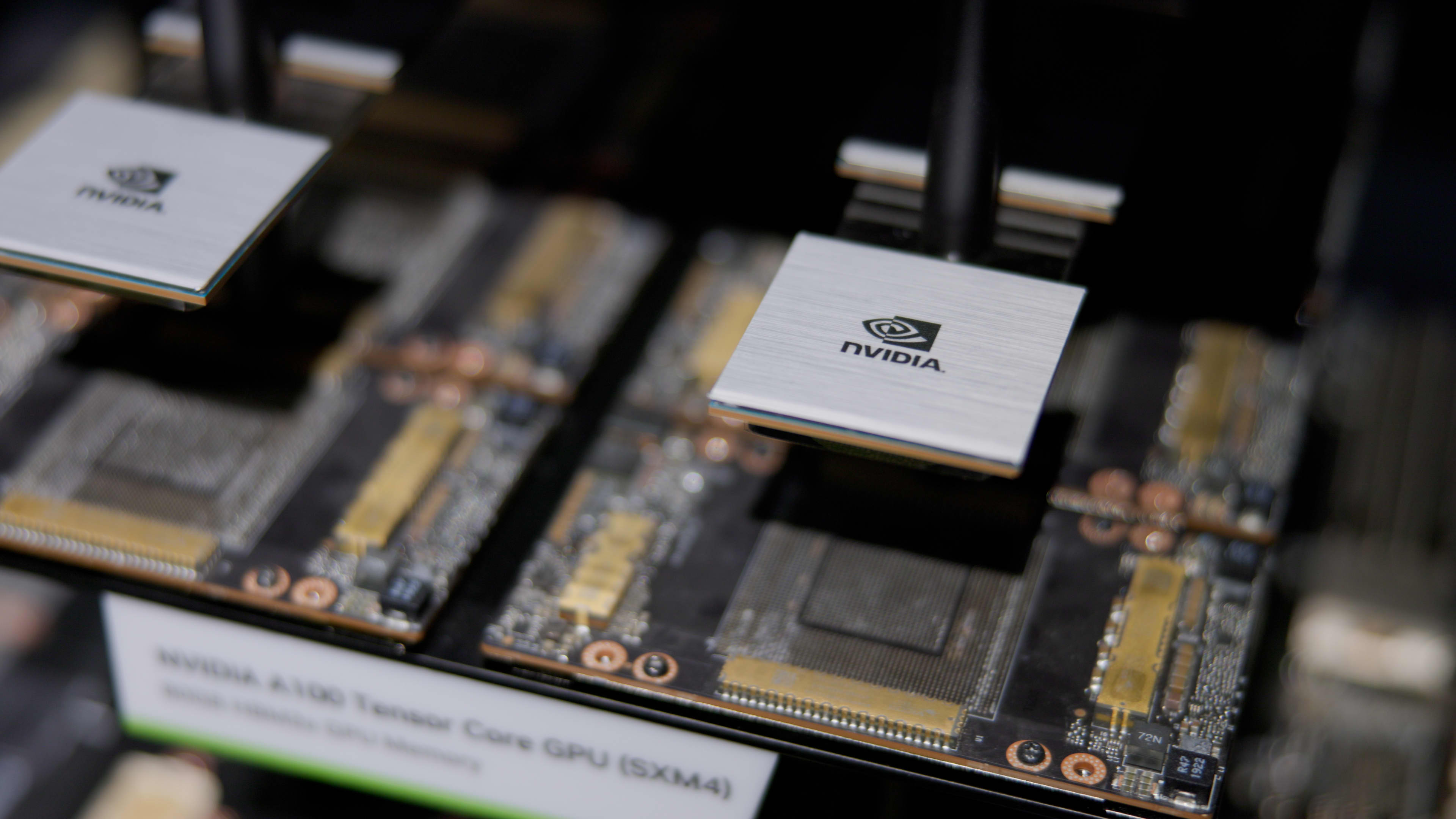

GPUs are chips used by firms like OpenAI to build cutting-edge AI programs such as ChatGPT.

If AMD’s AI chips, which it calls “accelerators,” are embraced by developers and server makers as substitutes for Nvidia’s products, it could represent a big untapped market for the chipmaker, which is best known for its traditional computer processors.

AMD CEO Lisa Su told investors and analysts in San Francisco on Tuesday that AI is the company’s “largest and most strategic long-term growth opportunity.”

“We think about the data center AI accelerator [market] growing from something like $30 billion this year, at over 50% compound annual growth rate, to over $150 billion in 2027,” Su said.
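The arithmetic behind Su’s forecast can be checked with a short sketch. It assumes the base year is 2023, which gives four years of compounding to 2027, and treats the quoted figures as exact starting points; the function names are illustrative and not from AMD.

```python
def project_market(start_billion, annual_growth, years):
    """Compound a starting market size forward at a fixed annual growth rate."""
    return start_billion * (1 + annual_growth) ** years


def implied_cagr(start_billion, end_billion, years):
    """Return the constant annual growth rate that links two market sizes."""
    return (end_billion / start_billion) ** (1 / years) - 1


# Figures quoted by Su; the 4-year horizon (2023 to 2027) is an assumption.
projected = project_market(30, 0.50, 4)
rate = implied_cagr(30, 150, 4)

print(f"Projected market: ${projected:.1f} billion")  # $151.9 billion
print(f"Implied CAGR for $30B -> $150B: {rate:.1%}")  # 49.5%
```

Under those assumptions, $30 billion growing 50% a year reaches roughly $152 billion, which is consistent with the “over $150 billion” figure.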

While AMD didn’t disclose a price, the move could put price pressure on Nvidia’s GPUs, such as the H100, which can cost $30,000 or more. Lower GPU prices may help drive down the high cost of serving generative AI applications.

AI chips are one of the bright spots in the semiconductor industry, while PC sales, a traditional driver of semiconductor processor sales, slump.

Last month, AMD CEO Lisa Su said on an earnings call that while the MI300X will be available for sampling this fall, it would start shipping in greater volumes next year. Su shared more details on the chip during her presentation on Tuesday.

“I love this chip,” Su said.

AMD said that its new MI300X chip and its CDNA architecture were designed for large language models and other cutting-edge AI models.

“At the center of this are GPUs. GPUs are enabling generative AI,” Su said.

The MI300X can use up to 192GB of memory, which means it can fit even bigger AI models than other chips. Nvidia’s rival H100 only supports 80GB of memory, for example.

Large language models for generative AI applications use lots of memory because they run an increasing number of calculations. AMD demoed the MI300X running a 40-billion-parameter model called Falcon. OpenAI’s GPT-3 model has 175 billion parameters.

“Model sizes are getting much larger, and you actually need multiple GPUs to run the latest large language models,” Su said, noting that with the added memory on AMD chips developers wouldn’t need as many GPUs.
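A rough sketch shows why memory capacity affects how many GPUs a model needs. It assumes 16-bit weights (2 bytes per parameter) and counts only the weights, ignoring activations, caches and other runtime overhead, so real requirements would be higher. The helper names are hypothetical.

```python
import math

BYTES_PER_PARAM_FP16 = 2  # assumption: weights stored in 16-bit precision


def weight_memory_gb(num_params, bytes_per_param=BYTES_PER_PARAM_FP16):
    """Approximate memory needed for the model weights alone, in GB."""
    return num_params * bytes_per_param / 1e9


def min_gpus_for_weights(num_params, gpu_memory_gb,
                         bytes_per_param=BYTES_PER_PARAM_FP16):
    """Smallest number of GPUs whose combined memory holds the weights."""
    needed_gb = weight_memory_gb(num_params, bytes_per_param)
    return math.ceil(needed_gb / gpu_memory_gb)


MI300X_MEMORY_GB = 192  # capacity cited in the article

for name, params in [("Falcon (40B)", 40_000_000_000),
                     ("GPT-3 (175B)", 175_000_000_000)]:
    gb = weight_memory_gb(params)
    gpus = min_gpus_for_weights(params, MI300X_MEMORY_GB)
    print(f"{name}: ~{gb:.0f} GB of weights, at least {gpus} GPU(s) at 192GB")
```

By this estimate, a 40-billion-parameter model’s weights take about 80GB and fit on a single 192GB chip, while a 175-billion-parameter model needs about 350GB and therefore at least two.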

AMD also said it would offer an Infinity Architecture that combines eight of its MI300X accelerators in one system. Nvidia and Google have developed similar systems that combine eight or more GPUs in a single box for AI applications.

One reason AI developers have historically preferred Nvidia chips is that Nvidia has a well-developed software package called CUDA that enables them to access the chip’s core hardware features.

AMD said on Tuesday that it has its own software for its AI chips that it calls ROCm.

“Now while this is a journey, we’ve made really great progress in building a powerful software stack that works with the open ecosystem of models, libraries, frameworks and tools,” AMD president Victor Peng said.

Source: CNBC